|

He is a researcher in artificial intelligence and an amateur researcher in mathematics. He works on reasoning, AI safety, and multimodal AI. He is also an incoming visiting faculty member at the National University of Singapore, and was previously a visiting researcher at Carnegie Mellon University. He always supports Slow Science.

Email: echo b3p0LmpAaWNsb3VkLmNvbQ== | base64 -d

If you would like to join his group in any other capacity, please fill out this form and then send him a short email note without any attached documents. |

|

Oz T. Jang [paper] (Under review) [code] An autonomous research agent that discovers, implements, and evaluates RL algorithms to improve mathematical reasoning, guided by metrics. 2025 |

|

Oz T. Jang [paper] (In preparation) Proposes combining RLHF with RLVR to improve large reasoning models. 2025 |

|

Oz T. Jang [paper] (In preparation) Proposes Double Zero Self-Evolving Large Reasoning Models, without data and without SFT. 2025 |

|

Oz T. Jang [paper] (In preparation) Proposes an SFT-hybrid-RFT large reasoning model. 2025 |

|

Oz T. Jang A recent survey and proposal on large reasoning models that pave the way to AGI. 2025 |

|

Ruijie Xie, Chaoyue Zhao, Oz T. Jang The first bilevel optimization algorithm that incorporates Lookahead's slow-fast weight mechanism for both model parameters and data weights, achieving comprehensive variance reduction. 2025 |

|

Oz T. Jang, Teng Yang, Xiaozhu Hu, Zi Yang, Chifong Wong Proposes a meta-learning optimization method named Lookahead-LSTM to improve generalization and data transferability. 2020 Spring |

|

Oz T. Jang, Wei Yuan Generates multi-view videos as a world-state representation, combining VLMs with text-to-video models to enable long-horizon decision making. 2023 Winter |

|

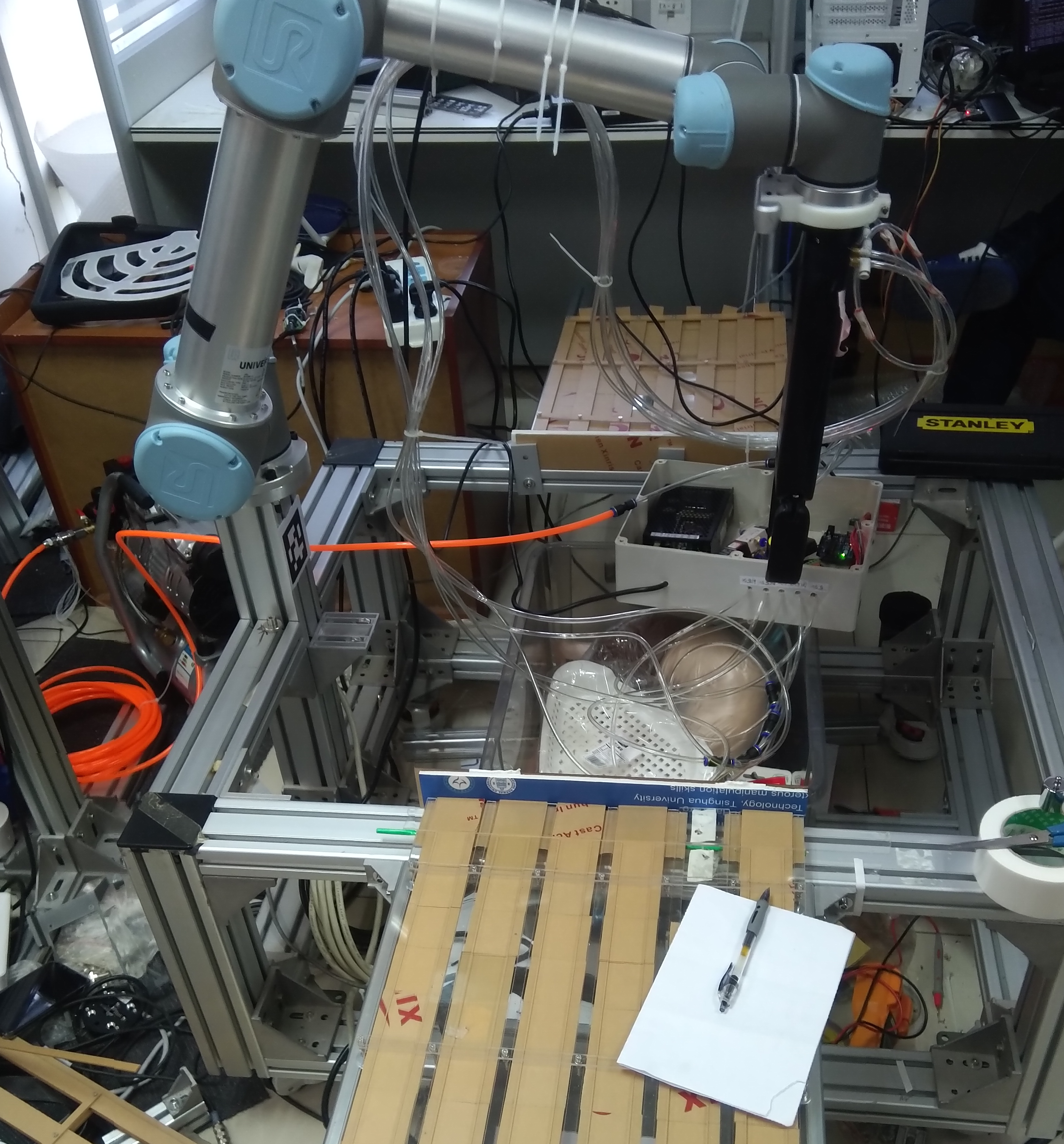

Oz T. Jang, Zhigang Li, Bowen Fu Merges object 6-DoF information and improves the performance of object localization for robotic manipulation. 2019 Fall |

|

Starting January 2025, he has committed 1-2 hours every week to providing guidance, suggestions, and/or mentorship to students from underrepresented groups or anyone else in need. Please fill in this form if you are interested. |

This template is a modification of Jon Barron's website template. |